By Walter Cunningham, 8/24/2010

The subjects and style of my writing attract rebuttals. I usually resist the temptation to respond, but the article by Robert Curl, Kenneth S. Pitzer-Schlumberger Professor of Natural Sciences Emeritus at Rice University and Nobel Prize winner in Chemistry, is just too good to resist. His article is typical of academics with all those advanced degrees who know what is best for the rest of us - if we would just listen. In typical global warming alarmist fashion, it attacks the credentials, the economic interests or the politics (or all three) of those who disagree with them, and does not cite any empirical data that would prove their critics do not know what they are talking about.

After citing the National Academy of Sciences endorsement of AGW, Professor Curl emphasizes the credentials of the members and claims the NAS is “objective.” Maybe we’re supposed to ignore bad science if the perpetrators’ credentials are good. NAS is certainly a prestigious organization, but, along with several other scientific organizations lately, it has descended into the world of science politics.

What about the 31,000 scientists who signed the Oregon Petition, saying they DO NOT think humans are making a noticeable contribution to global warming, global cooling, climate change or catastrophic climate change?

Professor Curl suggests that we “download a layman’s explanation… from the National Academy’s website at . . . to obtain the most authoritative information on the subject.” The site is quite representative of the alarmists’ approach to “explanation.” Instead of attempting to make the case, it treats AGW as a given. They do offer the reader several articles on dealing with human-caused global warming as a “fact of life.” Professor Curl’s “authoritative” source leaves a lot to be desired unless you are already a true believer in AGW.

Curl goes on to say, “all of humanity is faced with some difficult choices. No matter what we do, from a major course correction to doing nothing, it will be unpleasant and expensive.” Doesn’t that amount to an admission that even if we meet and exceed the CO2 cuts targeted by the IPCC, our temperature will not return to normal - whatever that is?

Curl is right when he says that any actions to restrict CO2 will be “unpleasant and expensive.” A reduction in CO2 will have to come from a reduction in fossil fuel use. President Obama has talked about reducing U.S. fossil fuel use by 80 percent by 2050. That would leave our projected population in 2050 with a fossil fuel energy consumption of 40 million Btu/year-per-capita - about equal to our per capita consumption back in 1880. Given the direct relationship between energy consumption and standard of living, such a move would be worse than “unpleasant.”

He does admit, “These sobering conclusions about future warming are projections based upon elaborate models of the Earth.” These are models built to prove their hypothesis that human caused CO2 is a dominant factor in controlling the Earth’s temperature. Models are built on assumptions, not data; assumptions are (or, should be) based on interpretation of empirical data. It is in the biased interpretation of historical data where we should be having a public debate. Models are not data.

An American Meteorological Society survey in November 2009, found that a full 62 percent disagreed or strongly disagreed with “Global climate models are reliable in their predictions for a warming of the planet.” In the same survey, 50 percent of the respondents disagreed or strongly disagreed with the IPCC assertion, “Most of the warming since 1950 is very likely human-induced.”

The real crisis today is that so many people seem bent on making decisions based on, as yet, unproven scientific hypotheses that will cost all of us dearly - in standard of living, not just dollars. All of that in case the minuscule contribution humans make to the tiny amount of CO2 in the atmosphere could devastate the planet in the next 50-100 years.

When the concern is serious enough, the question should be researched objectively and publicly. Those climate scientists who have not bought into the alarmists’ radical hypothesis deserve the same access to government grants as the fear mongers who have absorbed most of the $30 billion the government has expended to support AGW in the last 20 years.

We cannot always judge what a man says by his credentials. What he understands and can explain is most important. Look past the credentials and consider the logic of their case - if they try to make one. Better yet, look at the data yourself. That doesn’t mean models, which have not accurately predicted anything to do with climate.

What is wrong with laying out the data in a common setting so each advocate can understand why the other can’t see the light? We should be relying on the best objective data to draw scientific conclusions. How many times in the last decade have you seen a proponent of the new global warming hypothesis willing to publicly debate a climate scientist supporting the historical theory of our global climate?

For those who do not feel comfortable assessing the empirical data themselves, I recommend a paper that looks at how the historical data is used or misinterpreted to conclude that temperatures during our lifetime are unusual and that humans are responsible for temperature change. The paper is by Burt Rutan, the engineer behind Spaceship One and Spaceship Two. Like me, Burt is not a climate scientist and neither of us has an axe to grind on the question of AGW. Burt looks at each of the arguments the alarmists use to frighten us into believing that if we do not severely limit human CO2 emissions, they will devastate our planet by the end of the 21st century.

Show me the data. If it supports the claims of global warming alarmists, I will be glad to help alert the public. Unfortunately, the data I have been reviewing for the last 20 years provide no such support. See post here.

Walter Cunningham received a Bachelor of Arts degree with honors in Physics in 1960. He received a Master of Arts degree in Physics in 1961 from the University of California at Los Angeles. He was lunar module pilot on the first manned Apollo mission (Apollo 7).

By Dr. Anthony Lupo

Early in 2010, all signs pointed toward a warmer summer in the middle Mississippi region [1], and this would be related to the weakening El Nino [2],[3]. The forecast, however, did not go far enough because we did not anticipate how quickly La Nina conditions would take hold [4]. When asked about the possibility of a warm summer, I reminded people that the last few summers have been relatively cool, so even a normal summer may seem warm.

As the summer moves into late August, I have heard many in the media and in the local general public wonder aloud about this summer being a consequence of anthropogenic global warming, and that this summer has been the hottest in recent decades [5]. Putting this summer into context locally* would demonstrate that while it is the hottest summer of the decade, and the warmest since 1980, it is only the 11th warmest overall in 120 years. Of the ten warmest summers, nine occurred before 1960. Summers as of late have been cooler in our region.

While the years 2005-2007 were warmer than normal, these summers did not rank among the top 20 for our region. This current summer follows a stretch of summers that have been cooler overall, as four of the last eight have been below normal, some of these by quite a bit. The summers of 2004 and 2009 ranked as the 3rd and 9th coolest overall in our region, respectively.

Adding to the woes of this summer locally have been the relatively high dew points brought on by excessive precipitation in our region in the early part of the summer. Additionally, it has not been the maximum temperatures that have been the problem (we have failed to reach 100 degrees for the third consecutive year), it has been the consistently high minimum temperatures. While it is too early to tell what has happened nationwide, my guess is the story is much the same in other regions as well.

Globally, we have heard about the heat in Russia and the Middle East, and flooding in Pakistan. While this may seem like it is a consequence of climate change, this summer season is not unlike another recent summer, that of 2003. That year, it was a warm summer that followed the relatively cold winter of 2002-2003. That year, like this one, followed a weak El Nino event.

This El Nino was different from others in that the main sea surface temperature anomaly was located over the central tropical Pacific rather than over the eastern tropical Pacific. We [2], [3] have found that these types of weak El Ninos could be associated with colder winters over the eastern USA rather than the warmer winters which are routinely forecast to occur. Additionally, we have found that as El Nino transitions back toward La Nina, a warm summer over North America is generally the result [2], [3].

In 2003, as a warm summer set in, a strong heat wave also occurred over western Europe and many in France perished. This year, we have seen a repeat of this type of conditions as the Northern Hemisphere has been relatively warm. This summer, the excessive heat became established over Russia instead, due to a phenomenon called blocking [6]. Blocking causes large-scale weather patterns to stagnate, and if a ridge is established over a continent during the summer, it will be hot. We have seen this type of activity over Europe in 2003, Alaska in 2004 [7], and now Russia in 2010 [8].

Is this really the hottest summer globally? While some have reported that it is [8], an examination of the global weather as a whole would suggest it is not [9]. Lost in much of the noise has been the fact that in the Southern Hemisphere, especially South America, conditions have been much colder during their winter with unprecedented snows in many areas not used to them. There is also some speculation that this summer’s global warmth has been exaggerated by those with an agenda.

So, while this summer has seemed to be miserable compared to the last few, it has been much worse in the past, is due to natural phenomena (not anthropogenic climate change), and thankfully the heat should be winding down as August wears on and September comes in. Additionally, it is hoped that the Russian heat wave will draw more attention to the weather phenomenon of blocking, which is difficult to forecast [6], [7] but largely overlooked.

*Here we used the Columbia, MO temperatures, which are representative of much of central Missouri. The story has been similar elsewhere across the state. These summer temperatures are only complete as of 1 June to August 18.

[2] Lupo, A.R., Kelsey, E.P., D.K. Weitlich, I.I. Mokhov, F.A. Akyuz, Guinan, P.E., J.E. Woolard, 2007: Interannual and interdecadal variability in the predominant Pacific Region SST anomaly patterns and their impact on a local climate. Atmosfera, 20, 171-196.

[3] Lupo, A.R., E. P. Kelsey, D.K. Weitlich, N.A. Davis, and P.S. Market, 2008: Using the Monthly classification of global SSTs and 500 hPa height anomalies to predict temperature and precipitation regimes one to two seasons in advance for the mid-Mississippi region. Nat. Wea. Dig., 32:1, 11-33.

[6] Wiedenmann, J.M., A.R. Lupo, I.I. Mokhov, and E. Tikhonova, 2002: The Climatology of Blocking Anticyclones for the Northern and Southern Hemisphere: Block Intensity as a Diagnostic. J. Clim., 15, 3459-3473.

[7] Hussain, A., and A.R. Lupo, 2010: Scale and stability analysis of blocking events from 2002 to 2004: A case study of an unusually persistent blocking event leading to a heat wave in the Gulf of Alaska during August 2004. Advances in Meteorology, in press.


--------------

The Great Russian Heat Wave of 2010

World Climate Report

The longer and deadlier the heat wave in western Russia becomes, the more frequently it is being linked to anthropogenic global warming.

But global warming theory doesn’t come anywhere close to explaining why it’s so darn hot this summer in Moscow.

Long-term observations suggest a more basic cause - an unusual and unprecedented (at least since 1950) confluence of several naturally-occurring atmospheric circulation patterns that together combined to set the stage for extreme warmth. Add to that urbanization, changing forestry practices, and perhaps throw in a dash of global warming for good measure, and you take a situation that would otherwise be “very hot” and up it a notch to “record hot.”

The driving force of the 2010 heat wave has been a stationary weather system that has remained locked in place over western Russia since mid-June. The atmosphere is termed “blocked” when atmospheric circulation patterns remain fixed in place, instead of being progressive. The prolonged snow and cold in the eastern half of the U.S. last winter was caused by an atmospheric block which locked in a pattern that allowed arctic air to slide southward and storm systems to track up the east coast. The heat in Russia is caused by a blocking pattern which has locked in high pressure over Moscow and environs, which favors southerly (warm) flow, a lot of sunshine, and little rain.

Atmospheric blocking is not unique to today’s climate. It is associated with atmospheric teleconnection patterns, described by the National Weather Service’s Climate Prediction Center (CPC) as “recurring and persistent, large-scale patterns of pressure and circulation anomalies that span vast geographical areas.” The CPC’s description continues:

Teleconnection patterns are also referred to as preferred modes of low-frequency (or long time scale) variability. Although these patterns typically last for several weeks to several months, they can sometimes be prominent for several consecutive years, thus reflecting an important part of both the interannual and interdecadal variability of the atmospheric circulation.

All teleconnection patterns are a naturally occurring aspect of our chaotic atmospheric system, and can arise primarily as a reflection of internal atmospheric dynamics.

Teleconnection patterns reflect large-scale changes in the atmospheric wave and jet stream patterns, and influence temperature, rainfall, storm tracks, and jet stream location/ intensity over vast areas. Thus, they are often the culprit responsible for abnormal weather patterns occurring simultaneously over seemingly vast distances.

The CPC identifies and monitors about 10 different teleconnection indices, each with specific patterns of atmospheric circulation and associated impacts on surface temperatures and precipitation. The CPC has compiled the history of these teleconnection indices back to January 1950 and up through the present.

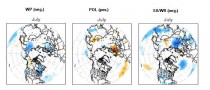

Several of the teleconnection patterns impact temperatures in the vicinity of western Russia; these include teleconnections named “West Pacific Pattern” (WP), “Polar/Eurasia Pattern” (POL), and the “East Atlantic/Western Russia Pattern” (EA/WR).

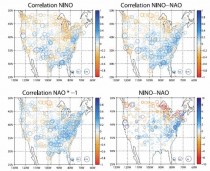

Figure 1 shows the July pattern of surface temperature anomalies when each of these three teleconnections is in its positive mode. When the teleconnections are in their negative mode, the temperature anomaly patterns are reversed. Notice that all three have “hot spots” in and around western Russia. The values of the WP, POL, and EA/WR indices for July were -2.93, 1.7, and -1.55, respectively. This means that each of these teleconnections was in a configuration that leads to higher than normal temperatures in western Russia.

Figure 1. Pattern of temperature anomalies that is associated with the positive mode of three teleconnections influencing western Russia (figures from the Climate Prediction Center).

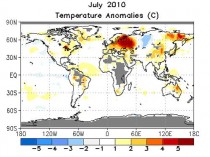

Figure 2 shows the observed surface temperature anomalies for July, which include the big bull’s eye of high temperatures over western Russia - an expected result of the chance combination of the three teleconnection patterns.

Figure 2. Surface temperature anomalies (C) for July, 2010 (figure from the Climate Prediction Center).

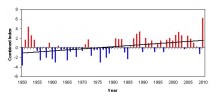

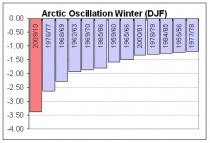

Figure 3 shows the timeseries of the combination (sum) of the July values for the WP, POL, and EA/WR teleconnection indices (we first flipped the sign of the WP and EA/WR indices so that the combined index reflects the same sign of the temperature anomalies over western Russia).

Figure 3. Combined index of the WP, POL, and EA/WR teleconnection values for the month of July, 1950-2010 (data from the CDC).

There are several things of note:

1) The July 2010 combined value is the highest since 1950 - nearly 50% greater than the second highest value which occurred in 1952.

2) The combined index has been mostly positive since 1981, and mostly negative from 1955 through 1980. This behavior imparts an overall positive trend since 1950.

3) The 2010 value is about 3 times greater than the value expected based on the trend alone.
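The construction of the combined index behind Figure 3 can be sketched in a few lines. This is only an illustration of the sign flip and sum described above, using the three July 2010 values quoted in the text; the function and variable names are made up for this sketch, and the authors' actual processing of the full 1950-2010 series is not shown in the source.

```python
# July 2010 index values quoted in the text (WP, POL, EA/WR).
JULY_2010 = {"WP": -2.93, "POL": 1.7, "EA/WR": -1.55}

# Sign convention taken from the text: WP and EA/WR are flipped so that a
# positive contribution corresponds to warmth over western Russia.
FLIP_SIGN = {"WP", "EA/WR"}


def combined_index(values):
    """Sum the indices after flipping the sign of WP and EA/WR."""
    return sum(-v if name in FLIP_SIGN else v for name, v in values.items())


if __name__ == "__main__":
    print(f"Combined July 2010 index: {combined_index(JULY_2010):.2f}")
```

With the quoted values, the three terms are 2.93, 1.7 and 1.55, so all three push the combined index in the warm direction.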

Is anthropogenic global warming behind any of this behavior?

It is hard to know for sure, but one thing that is certain is that if global warming does have a hand in the game (perhaps through the trend term), what it’s holding is pretty weak.

Figure 4 is a map of temperature anomalies for July 1936 - another very hot month in western Russia. Notice how the general pattern of temperature anomalies looks a lot like those from July 2010 (in Figure 2). This would suggest that the combined teleconnection index for July 1936 was also quite high - we don’t know how high, because the compiled data from the CPC only goes back to 1950. But clearly, it can get hot in Moscow and western Russia without our help.

Figure 4. Surface temperature anomalies (C) for July, 1936 (figure from the Goddard Institute for Space Studies).

The lack of data on teleconnections prior to 1950 limits the historical setting within which we can place the 2010 event. Would the increasing trend exhibited in Figure 3 look the same if we had included the known warm period of the 1930s? Just how high was the combined teleconnection index in July of 1936?

We don’t know. And lacking that knowledge, it is hard to ascertain what role other factors such as urbanization, smoke, or global warming may have had in making this summer a record summer in western Russia.

On its own, the summer of 2010 would have gone down in history as an extremely hot one in Moscow, with or without any influence from enhanced greenhouse gas concentrations.

Did our changes to the environment, locally, regionally, or globally add even a bit more to what nature had in store already?

Possibly? Probably?

The jury is still way out on this one.

See post on this one.

ICECAP NOTE: Recall this same area had one of the coldest (in places said to be the coldest) winters on record this past winter, again as the blocking pattern locked in, then with the ridge in the North Atlantic.

But then we were told that was weather and the extremes were consistent with AGW.

Scientists can now study climate change in far more detail with powerful new computer software released by the National Center for Atmospheric Research (NCAR) in Boulder, CO. The Community Earth System Model (CESM) will be one of the primary climate models used for the next assessment by the Intergovernmental Panel on Climate Change (IPCC).

CESM is the latest in a series of NCAR-based global models developed over the last 30 years. The models are jointly supported by the U.S. Department of Energy (DOE) and the National Science Foundation (NSF), which is NCAR’s sponsor. Scientists and engineers at NCAR, DOE laboratories, and several universities developed the CESM.

“The Community Earth System Model is yet another step toward representing improved physics and biogeochemistry in a coupled model,” says Anjuli Bamzai, program director in NSF’s Division of Atmospheric and Geospace Sciences, which funds NCAR.

“As our understanding of climate-relevant processes improves, it is imperative to represent these processes in the model.”

The new model’s advanced capabilities will help scientists shed new light on some of the critical mysteries of global warming, including:

What impact will warming temperatures have on the massive ice sheets in Greenland and Antarctica?

How will patterns in the ocean and atmosphere affect regional climate in coming decades?

What will be the likely interaction of climate change and tropical cyclones, including hurricanes?

How will tiny airborne particles, known as aerosols, affect clouds and temperatures?

CESM is one of about a dozen climate models worldwide that can be used to simulate the many components of Earth’s climate system, including the oceans, atmosphere, sea ice, and land cover. The model and its predecessors are unique in that they were developed by a broad community of scientists. CESM is freely available to researchers worldwide.

“With the Community Earth System Model, we can pursue scientific questions that we could not address previously,” says NCAR scientist James Hurrell, chair of the scientific steering committee that developed the model. “Thanks to its improved physics and expanded biogeochemistry, it gives us a better representation of the real world.”

Climate scientists rely on computer models to better understand Earth’s climate system because they cannot conduct large-scale experiments on the atmosphere itself. Climate models, like weather models, rely on a three-dimensional mesh that reaches high into the atmosphere and into the oceans. At regularly spaced intervals, or grid points, the models use laws of physics to compute atmospheric and environmental variables, simulating the exchanges among gases, particles, and energy across the atmosphere.

Because climate models cover far longer periods than weather models, they cannot include as much detail. Thus, climate projections appear on regional to global scales rather than local scales. This approach enables researchers to simulate global climate over years, decades or millennia. To verify a model’s accuracy, scientists typically simulate past conditions and then compare the model results to actual observations.
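The verification step described above, simulating past conditions and comparing them with observations, usually comes down to a few summary statistics. The following is a minimal sketch using two common ones, mean bias and root-mean-square error. The function names and the two short anomaly series are invented for illustration; they are not CESM output or real observations, and the release does not say which metrics NCAR uses.

```python
import math


def bias(simulated, observed):
    """Mean of (simulated - observed); positive means the model runs warm."""
    if len(simulated) != len(observed) or not observed:
        raise ValueError("series must be non-empty and of equal length")
    return sum(s - o for s, o in zip(simulated, observed)) / len(observed)


def rmse(simulated, observed):
    """Root-mean-square error between simulated and observed values."""
    if len(simulated) != len(observed) or not observed:
        raise ValueError("series must be non-empty and of equal length")
    squared = sum((s - o) ** 2 for s, o in zip(simulated, observed))
    return math.sqrt(squared / len(observed))


# Made-up temperature anomalies (degrees C), for illustration only.
simulated = [0.10, 0.20, 0.15, 0.30, 0.40]
observed = [0.05, 0.25, 0.10, 0.20, 0.35]

if __name__ == "__main__":
    print(f"bias = {bias(simulated, observed):+.3f} C")
    print(f"rmse = {rmse(simulated, observed):.3f} C")
```

A small bias with a large RMSE would indicate errors that cancel on average, which is why the two numbers are normally read together.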

The CESM builds on the Community Climate System Model, which NCAR scientists and collaborators have regularly updated since first developing it more than a decade ago. The new model enables scientists to gain a broader picture of Earth’s climate system by incorporating more influences.

Using the CESM, researchers now can simulate the interaction of marine ecosystems with greenhouse gases; the climatic influence of ozone, dust, and other atmospheric chemicals; the cycling of carbon through the atmosphere, oceans, and land surfaces; and the influence of greenhouse gases on the upper atmosphere. In addition, an entirely new representation of atmospheric processes in CESM will allow researchers to pursue a much wider variety of applications, including studies of air quality and biogeochemical feedback mechanisms.

Scientists have begun using both the CESM and the Community Climate System Model for an ambitious set of climate experiments to be featured in the next IPCC assessment reports, scheduled for release during 2013-14. Most of the simulations in support of that assessment are scheduled to be completed and publicly released beginning in late 2010, so that the broader research community can complete its analyses in time for inclusion in the assessment.

The new IPCC report will include information on regional climate change in coming decades. Using the CESM, Hurrell and other scientists hope to learn more about ocean-atmosphere patterns such as the North Atlantic Oscillation and the Pacific Decadal Oscillation, which affect sea surface temperatures as well as atmospheric conditions. Such knowledge, Hurrell says, can eventually lead to forecasts spanning several years of potential weather impacts, such as a particular region facing a high probability of drought, or another region likely facing several years of cold and wet conditions.

“Decision makers in diverse arenas need to know the extent to which the climate events they see are the product of natural variability and, hence, can be expected to reverse at some point, or are the result of potentially irreversible, human-influenced climate change. CESM will be a major tool to address such questions.”

Icecap Note: the model obviously has built in global warming - I thought the models were by nature supposed to be totally objective and let the chips fall as they may. They did not promise ability to predict important multi decadal ocean cycles, changes in the thermohaline circulations and no mention of improvements in use of solar factors. AR4 models virtually ignored solar changes and forcing. It sounds like the same old GHG, ozone chemistry, aerosol driven model with more land/sea interaction run at a higher resolution. Don’t expect any breakthrough findings or leap in skill.

By Joseph D’Aleo, CCM

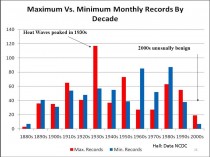

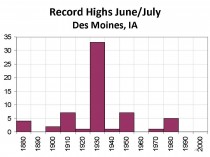

Last year, Bruce Hall plotted monthly heat and cold records for the 50 states. The 1930s showed up as by far the record decade over the last century (below).

This was in sharp contrast to the claims by NOAA, NCAR, the EPA and IPCC which all claimed the heat records were increasing at an alarming or accelerating rate.

We looked at Des Moines, Iowa, a continental climate that should reflect the heat more than coastal areas. We looked at the daily records in June and July. Again it was the 1930s that showed up as the clear winner. 1988 was a hot La Nina summer (similar to this year following an El Nino). It had 5 record highs. There have been no records after 1988 (below).

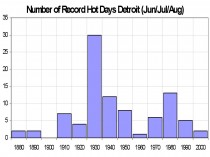

This morning I did a radio interview in Detroit. In preparation, I looked at record highs during the summer months of June, July and August. Again the 1930s was the clear winner with 30 record highs. The warm era from the 1930s to the early 1950s had two and a half times the number of record highs of the last three decades. Only two records were set this decade (2006) (below).

Though this summer has been a warm summer and may end up in the top ten warmest for Detroit, there have been no record heat days so far. There were 10 days with 90F temperatures in June and 2 this August. August was the warmest of the three summer months, mainly due to higher minimums due to more clouds and higher dewpoints thanks to moisture from air flowing over very wet grounds in WI and IA.

It has indeed been hot in the east and south central. But that was expected. It happens reliably when La Nina follows El Nino winters (1999, 1995, 1988, 1966) or after an El Nino with super blocking like this year (1977).

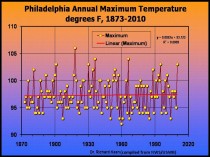

The heat in the east was intense in July, but Dr Richard Keen showed how there is no trend in highest annual temperatures in Philadelphia since 1873 (below).

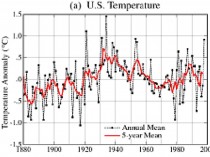

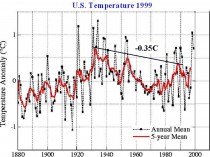

Back in 1999, when NOAA still was an honest broker about US temperatures, the USHCN temperatures looked like this (below).

James Hansen of NASA remarked about this data then: “The U.S. has warmed during the past century, but the warming hardly exceeds year-to-year variability. Indeed, in the U.S. the warmest decade was the 1930s and the warmest year was 1934.”

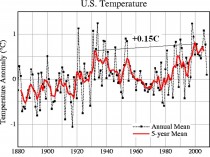

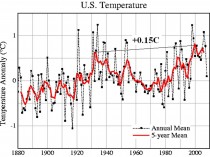

It showed the peak in the 1930s to 1940 was higher than the late century warmth. NOAA removed the urbanization adjustment in 2007 and suddenly the warmest decades became the recent ones (below).

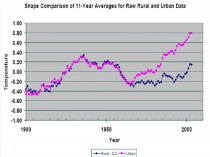

NASA’s Dr Edward Long looked at rural versus urban stations and found rural stations much more in line with the 1990s USHCN version (below).

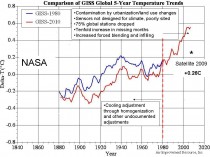

On a global scale, using the NASA version in 1980 compared to the NASA version in 2010, we see how efforts were made to not only show more warming in recent decades but suppress the warmth in the early to mid century (below).

So when you hear it proclaimed it was the warmest month, season, year or decade, remember that is in the world of manipulated data. Yes, this was a warm summer in many places (in San Diego it has been the coldest spring and summer on record to date - averaging 5F below normal). But last winter some parts of the US and Asia had the coldest winter ever, and many locations had the coldest since 1977/78, 1962/63 or 1971/72, even in the manipulated data. Then we were told that was weather, not climate, to be expected with global warming, and to ignore it. Do the same with the pockets of extreme warmth this summer, and expect another cold winter like the one they are experiencing in the Southern Hemisphere, although with La Nina it should be more like 2007/08 and 2008/09 than last winter, along with a resumption of the multidecadal cooling that began in 2002.

See post here.

Oh, and if you want another example of how NOAA is assuming an advocacy role: Heidi Cullen, formerly The Weather Channel’s leading global warming advocate and now with the George Soros-funded advocacy group Climate Central, sent the Association of State Climatologists materials that promote and explain global warming, which it compiled together with CICS, a NOAA cooperative institute.

By Michael Marshall, New Scientist

UPDATE: Temperatures have turned quite cool (49F) in Moscow and showers in Pakistan become more scattered as the persistent block broke down.

Raging wildfires in western Russia have reportedly doubled average daily death rates in Moscow. Diluvial rains over northern Pakistan are surging south - the UN reports that 6 million have been affected by the resulting floods.

It now seems that these two apparently disconnected events have a common cause. They are linked to the heatwave that killed more than 60 in Japan, and the end of the warm spell in western Europe. The unusual weather in the US and Canada last month also has a similar cause.

According to meteorologists monitoring the atmosphere above the northern hemisphere, unusual holding patterns in the jet stream are to blame. As a result, weather systems sat still. Temperatures rocketed and rainfall reached extremes.

Renowned for its influence on European and Asian weather, the jet stream flows between 7 and 12 kilometres above ground. In its basic form it is a current of fast-moving air that bobs north and south as it rushes around the globe from west to east. Its wave-like shape is caused by Rossby waves - powerful spinning wind currents that push the jet stream alternately north and south like a giant game of pinball.

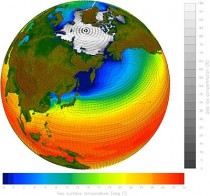

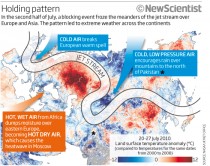

In recent weeks, meteorologists have noticed a change in the jet stream’s normal pattern. Its waves normally shift east, dragging weather systems along with it. But in mid-July they ground to a halt, says Mike Blackburn of the University of Reading, UK (see diagram). There was a similar pattern over the US in late June.

Stationary patterns in the jet stream are called “blocking events”. They are the consequence of strong Rossby waves, which push westward against the flow of the jet stream. They are normally overpowered by the jet stream’s eastward flow, but they can match it if they get strong enough. When this happens, the jet stream’s meanders hold steady, says Blackburn, creating the perfect conditions for extreme weather.
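The balance between the wave's westward push and the jet stream's eastward flow can be illustrated with the standard textbook formula for a barotropic Rossby wave, c = U - beta / k^2, where c is the eastward phase speed, U the background westerly wind, beta the northward gradient of the Coriolis parameter and k the zonal wavenumber. The sketch below is only that simplified picture, not the analysis the meteorologists quoted here performed. The 15 m/s wind speed and 50 degrees N latitude are assumed values chosen for illustration, and the function names are made up.

```python
import math

OMEGA = 7.292e-5        # Earth's rotation rate, rad/s
EARTH_RADIUS = 6.371e6  # mean Earth radius, m


def beta(lat_deg):
    """Northward gradient of the Coriolis parameter at a given latitude."""
    return 2.0 * OMEGA * math.cos(math.radians(lat_deg)) / EARTH_RADIUS


def rossby_phase_speed(u, wavelength_m, lat_deg):
    """Eastward phase speed (m/s) of a barotropic Rossby wave: c = U - beta/k^2."""
    k = 2.0 * math.pi / wavelength_m
    return u - beta(lat_deg) / k ** 2


def stationary_wavelength(u, lat_deg):
    """Wavelength (m) at which the wave stands still (c = 0) for westerly wind u > 0."""
    return 2.0 * math.pi * math.sqrt(u / beta(lat_deg))


if __name__ == "__main__":
    u, lat = 15.0, 50.0  # assumed illustrative values, not from the article
    length = stationary_wavelength(u, lat)
    print(f"stationary wavelength: {length / 1000.0:.0f} km")
    print(f"phase speed at that wavelength: {rossby_phase_speed(u, length, lat):.2e} m/s")
```

In this idealised picture, waves shorter than the stationary wavelength are carried east by the flow, while longer ones drift west against it.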

A static jet stream freezes in place the weather systems that sit inside the peaks and troughs of its meanders. Warm air to the south of the jet stream gets sucked north into the “peaks”. The “troughs” on the other hand, draw in cold, low-pressure air from the north. Normally, these systems a constantly on the move - but not during a blocking event. See below, enlarged here.

And so it was that Pakistan fell victim to torrents of rain. The blocking event coincided with the summer monsoon, bringing down additional rain on the mountains to the north of the country. It was the final straw for the Indus’s congested river bed (see “Thirst for Indus water upped flood risk”).

Similarly, as the static jet stream snaked north over Russia, it pulled in a constant stream of hot air from Africa. The resulting heatwave is responsible for extensive drought and nearly 800 wildfires at the latest count. The same effect is probably responsible for the heatwave in Japan, which killed over 60 people in late July. At the same time, the blocking event put an end to unusually warm weather in western Europe.

Blocking events are not the preserve of Europe and Asia. Back in June, a similar pattern developed over the US, allowing a high-pressure system to sit over the eastern seaboard and push up the mercury. Meanwhile, the Midwest was bombarded by air from the north, with chilly effects. Instead of moving on in a matter of days, “the pattern persisted for more than a week”, says Deke Arndt of the US National Climatic Data Center in North Carolina.

So what is the root cause of all of this? Meteorologists are unsure. Climate change models predict that rising greenhouse gas concentrations in the atmosphere will drive up the number of extreme heat events. Whether this is because greenhouse gas concentrations are linked to blocking events or because of some other mechanism entirely is impossible to say. Gerald Meehl of the National Center for Atmospheric Research in Boulder, Colorado - who has done much of this modelling himself - points out that the resolution in climate models is too low to reproduce atmospheric patterns like blocking events. So they cannot say anything about whether or not their frequency will change. ICECAP NOTE: These models are worthless on any scale or time frame.

There is some tentative evidence that the sun may be involved. Earlier this year astrophysicist Mike Lockwood of the University of Reading, UK, showed that winter blocking events were more likely to happen over Europe when solar activity is low - triggering freezing winters (New Scientist, 17 April, p 6).

Now he says he has evidence from 350 years of historical records to show that low solar activity is also associated with summer blocking events (Environmental Research Letters, in press). “There’s enough evidence to suspect that the jet stream behaviour is being modulated by the sun,” he says.

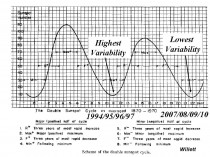

ICECAP NOTE: Hurd ‘Doc’ Willett of MIT presented at the 2nd Annual Northeast Storm Conference in 1978 a view of how the 22 year cycle affected weather patterns. His Hale Cycle work would have suggested the recent minimum 2007-2010 should be one of high “persistence” and thus low “variability”. This may have been augmented by the 106/213 year cycle concurrence. Intraseasonal patterns have been amazingly persistent since 2007. Willett passed away in 1992. We are glad to see Professor Lockwood is back doing good science.

Blackburn says that blocking events have been unusually common over the last three years, for instance, causing severe floods in the UK and heatwaves in eastern Europe in 2007. Solar activity has been low throughout.

Thirst for Indus water upped flood risk

It isn’t just heavy rain that is to blame for the current floods in Pakistan: water management has also exacerbated the risk of such events.

Both Pakistan and India depend heavily on the Indus river for their water needs. Since independence in 1947 Pakistan has virtually doubled the amount of land it irrigates with Indus water and the picture is similar for India. This thirst for water carries a heavy cost.

The Indus drains the Himalayan mountain chain and carries vast amounts of sediment. As more water is diverted into irrigation, the river flow has been severely reduced, and can’t now carry its accustomed cargo of sediment downstream. A growing number of levees and man-made channels also trap sediment on its way out to sea.

“More silt has been deposited into sand bars, reducing the capacity of the river,” says Daanish Mustafa, an expert on Indus water management at King’s College London, UK. “There is no doubt that infrastructure has exacerbated the flood risk significantly.”

Antiquated irrigation systems in Pakistan may also have made the problem worse. “Pakistan unfortunately has one of the worst irrigation efficiencies in the world,” says Uttam Sinha, a water security researcher from the Institute for Defence Studies and Analyses in New Delhi, India. Repairing the leaks and installing modern irrigation technology may help to reduce the flood risk in future. Kate Ravilious

See the New Scientist story here.

By Joseph D’Aleo

The Inspector General wrote, on behalf of NOAA, a response to Congressmen Barton and Rohrabacher and the other committee members about the issues raised about the US climate database (USHCN) (see attached letter and report here). They spoke with the NWS, NCDC, ATDD, several state climatologists, the AASC, the USGRP and the AMS to form their response. They examined quality control procedures, background documentation, operating procedures, budget requirements and management plans.

They examined the USHCN program to verify that steps were taken to ensure quality control of the data. NOAA admitted to issues with siting, undocumented relocation, instrument changes, urbanization and missing data but claimed that these issues were being addressed in their new version 2 with its new ‘pal reviewed’ algorithm designed to detect “previously undisclosed inhomogeneities”.

The write-up and description of the process of pal review are very amusing, including an internal review and a mixed journal review, with one reviewer claiming a number of issues had not been addressed. Ironically, the reviews were apparently, with one exception, accidentally eaten by the NOAA mascot dog and were unavailable to the Inspector General. Though there was one negative review, the Bulletin of the American Meteorological Society, not surprisingly given its advocacy goal, chose to publish it, giving it ‘peer review’ status credit.

The inspector general spoke with several other individuals not directly involved in the review process and got comments from one that NOAA needed to explain the process more and a humorous claim by the chair of the Applied Climatology committee of the AMS that the developers of the new algorithm did a ‘fantastic job’ with the new algorithm.

All reviewers, to their credit, admitted there was a need for an improved climate data set not requiring the many adjustments made to the existing one. NOAA is requesting $100 million to implement a new national station network. This is so even though the pilot program in Alabama cost only $30,000 to implement and make operational. It’s only your tax dollars. Though they argued against what Anthony Watts, Roger Pielke Sr, I and others have claimed about the issues with their network, this is a tacit admission that these issues were real and in need of addressing (progress made).

THE VERSION 2 ALGORITHM

Despite the intimation that the algorithm corrects for all the deficiencies ADMITTED by NOAA, the new algorithm really only addresses two well. It is a change-point algorithm looking for discontinuities that would indicate previously undocumented station moves, land use changes or instrument changes. It is not clear how many of these issues among the 1221 stations in the network were uncovered with the new algorithm.
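To make the idea of a change-point search concrete, here is a minimal sketch. It is not NOAA's version 2 code: the function name `find_step_change`, the `min_segment` parameter and the anomaly values are all made up for illustration. It simply tries every split of a series and keeps the one where the means before and after differ most.

```python
from statistics import mean

def find_step_change(series, min_segment=5):
    """Return (index, shift) for the split that best separates the series
    into two segments with different means. Hypothetical helper, not NOAA's method."""
    best_index, best_score, best_shift = None, 0.0, 0.0
    n = len(series)
    for i in range(min_segment, n - min_segment + 1):
        left, right = series[:i], series[i:]
        shift = mean(right) - mean(left)
        # Squared shift weighted by segment sizes: the drop in squared error
        # gained by fitting two means instead of one.
        score = shift * shift * len(left) * len(right) / n
        if score > best_score:
            best_index, best_score, best_shift = i, score, shift
    return best_index, best_shift

# Synthetic annual anomalies: a flat record, then a step of about +1.0 at index 10
anomalies = [0.1, -0.1, 0.0, 0.2, -0.2, 0.1, 0.0, -0.1, 0.1, -0.1,
             1.1, 0.9, 1.0, 1.2, 0.8, 1.1, 1.0, 0.9, 1.1, 0.9]
index, shift = find_step_change(anomalies)
print(index, round(shift, 2))
```

On this synthetic series the search locates the break at the point where the step was inserted. A real homogenization procedure would also need a significance test and comparison with neighboring stations, neither of which is attempted here.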

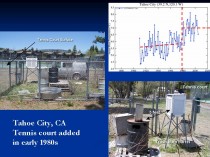

Here is an enlarged version of the example (courtesy of Anthony Watts’s surfacestations.org effort) of a land use change that the new algorithm ‘should’ have caught.

Here a tennis court was built around a station in Tahoe City, CA. The enclosure around the Stevenson shelter housing the sensor included a trash burn barrel within 5 feet. The data plot for Tahoe City reflected the change made around 1980.

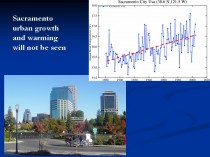

What is left uncovered and unaddressed are far worse issues: urbanization and bad siting. The new algorithm would never catch the slow ramp-up of temperatures found in most cities and towns as they grow (as in Sacramento). Oke (1973) noted that even small towns can develop a significant urban warm bias (about 2C for a population of just 1,000).

Nor would the new algorithm catch the slow degradation of siting as trees grow up, as pavement, buildings or other heat sources slowly encroach around a station, or as the shelter is not properly maintained, as at the Snake Creek, Utah station.

In actual fact, version 2 took a step backwards by removing a previous urbanization adjustment. Far more stations, in both urban areas and even small to moderate-size towns, exhibited growth, and many airports saw growth of the city around them. Removing the adjustment introduced a warming trend in what had been a cooling linear trend since 1940, about which even James Hansen admitted in 1999:

“The U.S. has warmed during the past century, but the warming hardly exceeds year-to-year variability. Indeed, in the U.S. the warmest decade was the 1930s and the warmest year was 1934.”

The temperature plot at that time in USHCN version 1 looked like this, with the recent warming significantly less than that around 1940.

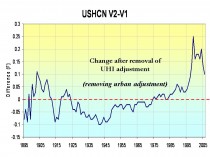

After removing the urban adjustment in 2007, the US plot looked like this.

The difference in the two is shown below:

This change introduced a cooling of the previous warm period from the 1910s to 1940s and a warming post 1985 but especially after 2000.

IS THE URBAN ADJUSTMENT REALLY NECESSARY?

Literally dozens of papers have documented that it is. See our paper on Surface Temperature Records: A Policy Driven Deception for a lengthy discussion of it and of the siting issue, which despite NCDC’s claims is not properly addressed in version 2. Almost every night your local television broadcaster will make a forecast like ‘lows tonight in town near 60 but down in the upper 40s in the colder (more rural) spots.’

NOAA uses a paper by their own Tom Peterson who played statistical games with a data set and claimed it showed an urban adjustment was no longer needed.

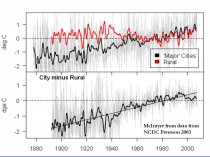

Steve McIntyre challenged NOAA’s Peterson (2003), who had said, “Contrary to generally accepted wisdom, no statistically significant impact of urbanization could be found in annual temperatures,” by showing that the difference between urban and rural temperatures for Peterson’s own 2003 station set was 0.7C, and between temperatures in large cities and rural areas 2C.

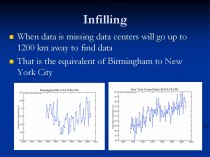

Despite the new algorithm, which might catch a few station moves or changes that previously went undocumented, NOAA’s USHCN remains seriously flawed. In fact it is likely WORSE than it was a decade ago when it adjusted for urbanization. Ironically, their global data set GHCN is even less trustworthy because it has huge holes in it and much more missing monthly data requiring infilling, a process that may require using data from 1200km away to estimate the missing data (the equivalent of using New York City to fill in for missing months in Birmingham, Alabama).

More to come. See PDF here.

By Paul Driessen

If 10% ethanol in gasoline is good, 15% (E15) will be even better. At least for some folks.

We’re certainly heading in that direction - thanks to animosity toward oil, natural gas and coal, fear-mongering about global warming, and superlative lobbying for “alternative,” “affordable,” “eco-friendly” biofuels. Whether the trend continues, and what unintended consequences will be unleashed, will depend on Corn Belt versus consumer politics and whether more people recognize the downsides of ethanol.

Federal laws currently require that fuel suppliers blend more and more ethanol into gasoline, until the annual total rises from 9 billion gallons of EtOH in 2008 to 36 billion in 2022. The national Renewable Fuel Standard (RFS) also mandates that corn-based ethanol tops out at 15 billion gallons a year, and the rest comes from “advanced biofuels” - fuels produced from switchgrass, forest products and other non-corn feedstocks, and having 50% lower lifecycle greenhouse gas emissions than petroleum.

These “advanced biofuels” thus far exist only on paper or in laboratories and demonstration projects. But Congress apparently believes passing a law will turn wishes into horses and mandates into reality. Create the demand, say ethanol activists, and the supply will follow. In plain-spoken English: Impose the mandates and provide sufficient subsidies, and ethanol producers will gladly “earn” billions growing crops, building facilities and distilling fuel.

Thus, ADM, Cargill, POET bio-energy and the Growth Energy coalition will benefit from RFS and other mandates, loan guarantees, tax credits and direct subsidies. Automobile and other manufacturers will sell new lines of vehicles and equipment to replace soon-to-be-obsolete models that cannot handle E15 blends. Lawmakers who nourish the arrangement will continue receiving hefty campaign contributions from Big Farma.

However, voter anger over subsidies and deficits bodes ill for the status quo. So POET doubled its Capitol Hill lobbying budget in 2010, and the ethanol industry has launched a full-court press to have the Senate, Congress and Environmental Protection Agency raise the ethanol-in-gasoline limit to 15% ASAP. As their anxiety levels have risen, some lobbyists are suggesting a compromise at 12% (E12).

Not surprisingly, ethanol activism is resisted by people on the other side of the ledger - those who will pay the tab, and those who worry about the environmental impacts of ethanol production and use.

* Taxpayer and free market advocates point to the billions being transferred from one class of citizens to another, while legislators and regulators lock up billions of barrels of oil, trillions of cubic feet of natural gas, and vast additional energy resources in onshore and offshore America. They note that ethanol costs 3.5 times as much as gasoline to produce, but contains only 65% as much energy per gallon as gasoline.

* Motorists, boaters, snowmobilers and outdoor power equipment users worry about safety and cost. The more ethanol there is in gasoline, the more often consumers have to fill up their tanks, the less value they get, and the more they must deal with repairs, replacements, lost earnings and productivity, and malfunctions that are inconvenient or even dangerous.
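The fill-up point can be put in rough numbers using the 65% energy figure quoted above. This is a back-of-the-envelope sketch: the function names are made up, and it assumes mileage scales directly with the energy content of the fuel, which real engines only approximate.

```python
ETHANOL_ENERGY_RATIO = 0.65  # energy per gallon relative to gasoline, as quoted above

def blend_energy_ratio(ethanol_fraction):
    """Energy per gallon of a blend relative to pure gasoline (hypothetical helper)."""
    return (1 - ethanol_fraction) + ethanol_fraction * ETHANOL_ENERGY_RATIO

def extra_fuel_needed(ethanol_fraction):
    """Fractional increase in gallons needed to cover the same distance,
    assuming mileage is proportional to energy content."""
    return 1 / blend_energy_ratio(ethanol_fraction) - 1

for label, fraction in [("E10", 0.10), ("E12", 0.12), ("E15", 0.15)]:
    print(label, round(blend_energy_ratio(fraction), 4),
          f"{extra_fuel_needed(fraction):.1%} more fuel")
```

Under these assumptions E10 carries about 96.5% of the energy of straight gasoline and E15 about 94.75%, so the extra fuel burned rises from roughly 3.6% to roughly 5.5%.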

Ethanol burns hotter than gasoline. It collects water and corrodes plastic, rubber and soft metal parts. Older engines and systems may not be able to handle E15 or even E12, which could also increase emissions and adversely affect engine, fuel pump and sensor durability.

Home owners, landscapers and yard care workers who use 200 million lawn mowers, chainsaws, trimmers, blowers and other outdoor power gear want proof that parts won’t deteriorate and equipment won’t stall out, start inadvertently or catch fire. Drivers want proof that their car or motorcycle won’t conk out on congested highways or in the middle of nowhere, boat engines won’t die miles from land or in the face of a storm, and snowmobiles won’t sputter to a stop in some frigid wilderness.

All these people have a simple request: test E12 and E15 blends first. Wait until the Department of Energy and private sector assess these risks sufficiently, and issue a clean bill of health, before imposing new fuel standards. Safety first. Working stiff livelihoods second. Bigger profits for Big Farma and Mega Ethanol can wait. Some unexpected parties recently offered their support for more testing.

Representatives Henry Waxman (D-CA), Ed Markey (D-MA), Joe Barton (R-TX) and Fred Upton (R-MI) wrote to EPA Administrator Lisa Jackson, advising her that “Allowing the sale of renewable fuel… that damages equipment, shortens its life or requires costly repairs will likely cause a backlash against renewable fuels. It could also seriously undermine the agency’s credibility in addressing engine fuel and engine issues in the future.”

* Corn growers will benefit from a higher ethanol RFS. However, government mandates mean higher prices for corn - and other grains, as corn and switchgrass incentives reduce farmland planted in wheat or rye. Thus, beef, pork, poultry and egg producers must pay more for corn-based feed; grocery manufacturers face higher prices for grains, eggs, meat and corn syrup; and folks who simply like affordable food cringe as their grocery bills go higher.

* Whether the issue is food, vehicles or equipment, blue collar, minority, elderly and middle class families would be disproportionately affected, Affordable Power Alliance co-chairman Harry Jackson, Jr. points out. They have to pay a larger portion of their smaller incomes for food, and own older cars and power equipment that would be particularly vulnerable to E15 fuels.

* Ethanol mandates also drive up the cost of food aid - so fewer malnourished, destitute people can be fed via USAID and World Food Organization programs.

Biotechnology will certainly help, by enabling farmers to produce more biofuel crops per acre, using fewer pesticides and utilizing no-till methods that reduce soil erosion, even under drought conditions. If only Greenpeace and other radical groups would cease battling this technology. However, there are legitimate environmental concerns.

* Oil, gas, coal and uranium extraction produces large quantities of high-density fuel for vehicles, equipment and power plants (to recharge batteries) from relatively small tracts of land. We could produce 670 billion gallons of oil from Arctic land equal to 1/20 of Washington, DC, if ANWR weren’t off limits.

By contrast, 15 billion gallons of corn-based ethanol requires cropland and wildlife habitat the size of Georgia, and for 21 billion gallons of advanced biofuel we’d need South Carolina planted in switchgrass.

* Ethanol has only two-thirds the energy value of gasoline - and it takes 70% more energy to grow and harvest corn and turn it into EtOH than what it yields as a fuel. There is a “net energy loss,” says Cornell University agriculture professor David Pimentel.

* Pimentel and other analysts also calculate that growing and processing corn into ethanol requires over 8,000 gallons of water per gallon of alcohol fuel. Much of the water comes from already stressed aquifers - and growing the crops results in significant pesticide, herbicide and fertilizer runoff.

* Ethanol blends do little to reduce smog, and in fact result in more pollutants evaporating from gas tanks, says the National Academy of Sciences. As to preventing climate change, thousands of scientists doubt the human role, climate “crisis” claims and efficacy of biofuels in addressing the speculative problem.

Meanwhile, Congress remains intent on mandating low-water toilets and washing machines, and steadily expanding ethanol diktats. And EPA wants to crack down on dust from livestock, combine operations and tractors in farm fields.

“With Congress,” Will Rogers observed, “every time they make a joke it’s a law, and every time they make a law it’s a joke.” If it had been around in 1934, he would have added EPA. Let’s hope for some change. (PDF)

Paul Driessen is senior policy advisor for the Committee For A Constructive Tomorrow and Congress of Racial Equality, and author of Eco-Imperialism: Green power - Black death.

By Paul MacRae, August 5, 2010

The recent report by the National Oceanic and Atmospheric Administration shows that surface temperatures have increased in the past decade. In fact, the NOAA report, “State of the Climate in 2009,” says 2000-2009 was 0.2 Fahrenheit (0.11 Celsius) warmer than the decade previous. The press release was so splashy it made the front page of Toronto’s Globe and Mail with the headline: “Signs of warming earth ‘unmistakable’”.

Of course, given that the planet is in an interglacial period, we would expect “unmistakable” signs of warming, including melting glaciers and Arctic ice, rising temperatures, and rising sea levels. That’s what the planet does during an interglacial.

Furthermore, we’re nowhere near the peak reached by the interglacial of 125,000 years ago, when temperatures were 1-3C higher than today and sea levels up to 20 feet higher, according to the Intergovernmental Panel on Climate Change itself. In other words, the Globe might as well have had a headline reading “Signs of changing weather ‘unmistakable’.”

Similarly, the NOAA report laments: “People have spent thousands of years building society for one climate and now a new one is being created - one that’s warmer and more extreme.” The implication is that we can somehow freeze-dry the climate we’ve got to last forever, which is absurd.

Sea levels have risen 400 feet in the past 15,000 years, causing all kinds of inconvenience for humanity in the process - and all quite naturally. As the interglacial continues, sea levels will rise and temperatures will increase - until the interglacial reaches its peak, at which point the planet will again move toward glacial conditions. To think that we can somehow stop this process is insane.

Even die-hard alarmists admitted 2000-2009 cooling

But what about the NOAA claim that the surface temperature increased .11C during 2000-2009? Although they did everything possible to hide this information from the public, media, politicians, and even fellow scientists, by the late 2000s even die-hard alarmists were eventually forced to accept that the surface temperature record showed no warming as of the late 1990s, and some cooling as of about 2002. In other words, overall, for the first decade of the 21st century, there was no warming and even some cooling.

One of the consistent themes in the Climategate emails was consternation that the planet wasn’t warming as expected by the models (that is, about 0.2C per decade). For example, as early as 2005 the then head of the Climatic Research Unit (CRU), Phil Jones, wrote in an email: “The scientific community would come down on me in no uncertain terms if I said the world had cooled from 1998. OK it has but it is only seven years of data and it isn’t statistically significant.”

Fellow Climategate emailer and IPCC contributor Kevin Trenberth wrote to hockey-stick creator Michael Mann in 2009: “The fact is that we cannot account for the lack of warming at the moment and it’s a travesty that we can’t.” Note the date: 2009, the last year of the decade. As far as Trenberth knew - and he should have known as a leading IPCC author - the planet hadn’t warmed for several years up to that time.

Even Tim Flannery, author of the arch-alarmist The Weather Makers, acknowledged in November 2009: “In the last few years, where there hasn’t been a continuation of that warming trend, we don’t understand all of the factors that creates Earth’s climate, so there are some things we don’t understand, that’s what the scientists were emailing about. These people [the scientists] work with models, computer modeling. When the computer modeling and the real world data disagree you have a problem.”

Jones tries for climate honesty

Yes, you do have a problem, to the point where, in February 2010, after he’d been suspended as head of the CRU following the Climategate scandal, and in an attempt to restore his reputation as an honest scientist, Jones came a bit clean in an interview with the BBC. For example, Jones agreed with the BBC interviewer that there had been “no statistically significant warming” since 1995 (although he asserted that the warming was close to significant), whereas in his 2005 email he was at pains to hide the lack of warming from the public and even fellow researchers.

Jones admitted that from 2002-2009 the planet had been cooling slightly (-0.12C per decade), although he contended that “this trend is not statistically significant.” In short, as far as Jones knew in February 2010 - and as the keeper of the Hadley-CRU surface temperature record he was surely in a very good position to know - the planet hadn’t warmed on average over the decade.

In the BBC interview, Jones calculated the overall surface temperature trend for 1975 to 2009 to be +0.16C per decade. Since that includes the warming years 1975-1998, it seems incredible that NOAA could manufacture a warming of 0.11C for 2000-2009, as shown in this graph from the 2009 NOAA report, page 5.

To show this level of warming, NOAA must have included the lead-up to the January-March 2010 El Nino. A surge in warming at the end of the decade would tend to pull the 2000-2009 average up, but this doesn’t negate the fact that for almost all of the last decade, the planet did not warm.

(Note that the temperature is in Fahrenheit degrees. This caused much confusion in Canadian newspapers, including the Globe and Mail, the National Post, and most newspapers on the National Post’s Postmedia news network. All reported the increase as 0.2 Celsius rather than Fahrenheit, thereby doubling the already dubious warming claimed by NOAA. On Monday, Aug. 1, I sent letters to the Globe, National Post and Victoria Times Colonist pointing out this factual error. None of these newspapers has printed either the letter or a correction.)
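The size of the reporting error is easy to check. A temperature difference converts with the 5/9 factor alone, with no 32-degree offset, since the offset cancels when two readings are subtracted. The short sketch below (function and variable names are illustrative only) shows what printing the Fahrenheit figure as Celsius does to the number.

```python
def delta_f_to_c(delta_f):
    """Convert a temperature DIFFERENCE from Fahrenheit to Celsius degrees."""
    return delta_f * 5.0 / 9.0

noaa_increase_f = 0.2                              # increase as published by NOAA, in Fahrenheit
correct_c = delta_f_to_c(noaa_increase_f)          # about 0.11 Celsius
misreported_c = 0.2                                # the same number printed as Celsius
overstatement = misreported_c / correct_c          # 9/5 = 1.8

print(round(correct_c, 2), round(overstatement, 2))
```

Strictly, the mislabeled figure overstates the increase by a factor of 1.8, which is the "doubling" described above in round terms.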

NOAA’s U.S. temperatures contradict 2009 report

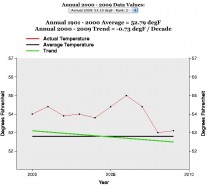

Curiously, another part of the NOAA website directly contradicts the NOAA report. On its site, NOAA offers a gadget that lets browsers check the temperature trend in the continental United States for any two years between 1895 and 2010. Here’s what the graph shows for the years 2000-2009 in the United States:

This graph shows a temperature decline of 0.73 Fahrenheit (-0.4C) for 2000-2009 in the U.S. To get a perspective on how large a decline this is: the IPCC estimates that the temperature increase for the whole of the 20th century was 1.1F, or 0.6C. In other words, at least in the United States, the past decade’s cooling wiped out two-thirds of the temperature gain of the last century.

While the U.S. isn’t, of course, the whole world, it has the world’s best temperature records, and a review of the NOAA data since 1895 shows that in the 20th century the U.S. temperature trends mirrored, quite closely, the global temperature trends. So, for example, between 1940-1975, a global cooling period, the NOAA chart showed a temperature decline of 0.14F (-0.07C).

In other words, it stretches credulity to the breaking point to believe that the global temperature trend from 2000-2009 could be a full 0.51C - half a degree Celsius - higher than the temperature trend for the United States (that is, the gap between -0.4C and +0.11C).
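For readers who want to check the arithmetic, the figures quoted above can be worked through in a few lines. The variable names are illustrative; every number comes from the text.

```python
us_decline_f = 0.73        # U.S. decline for 2000-2009, Fahrenheit, from the NOAA gadget
century_gain_f = 1.1       # IPCC estimate of 20th-century warming, Fahrenheit
global_trend_c = 0.11      # NOAA's reported global increase for 2000-2009, Celsius

us_decline_c = us_decline_f * 5.0 / 9.0            # differences convert by 5/9 only
share_of_century = us_decline_f / century_gain_f   # fraction of the century's gain
gap_c = global_trend_c - (-round(us_decline_c, 1)) # global trend minus U.S. trend

print(round(us_decline_c, 2), round(share_of_century, 2), round(gap_c, 2))
```

The decline converts to about 0.41C, which is about 66% of the century's gain, and the gap between the rounded U.S. figure and the global figure is 0.51C.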

Until NOAA issues a correction (which isn’t likely), the cooling of the past decade - which has been such an embarrassment to the hypothesis that human-caused carbon emissions will cause runaway warming - is gone, conjured away by a wave of the NOAA climate fairy’s magic wand.

See compilation of scientist responses to NOAA’s report by SPPI here.

Icecap Note: the warm spring and hot summer for a lot of places is characteristic of a La Nina summer post an El Nino winter. Best example may be 1988. This was used by many forecasters to warn of a hot summer. The same hypocritical alarmists touting the extreme summer heat in places (one of the coldest summers in a century in the west), told us to ignore the coldest winters in decades and in places ever in the Northern Hemisphere last winter as that was weather not climate.


By Dr. Ross McKitrick

Summary

There are three main global temperature histories: the combined CRU-Hadley record (HADCRU), the NASA-GISS (GISTEMP) record, and the NOAA record. All three global averages depend on the same underlying land data archive, the Global Historical Climatology Network (GHCN). CRU and GISS supplement it with a small amount of additional data.

Because of this reliance on GHCN, its quality deficiencies will constrain the quality of all derived products. The number of weather stations providing data to GHCN plunged in 1990 and again in 2005. The sample size has fallen by over 75% from its peak in the early 1970s, and is now smaller than at any time since 1919. The collapse in sample size has not been spatially uniform. It has increased the relative fraction of data coming from airports to about 50 percent (up from about 30 percent in the 1970s). It has also reduced the average latitude of source data and removed relatively more high-altitude monitoring sites.

GHCN applies adjustments to try and correct for sampling discontinuities. These have tended to increase the warming trend over the 20th century. After 1990 the magnitude of the adjustments (positive and negative) gets implausibly large.

CRU has stated that about 98 percent of its input data are from GHCN. GISS also relies on GHCN with some additional US data from the USHCN network, and some additional Antarctic data sources. NOAA relies entirely on the GHCN network.

Oceanic data are based on sea surface temperature (SST) rather than marine air temperature (MAT). All three global products rely on SST series derived from the ICOADS archive, though the Hadley Centre switched to a real time network source after 1998, which may have caused a jump in that series. ICOADS observations were primarily obtained from ships that voluntarily monitored SST. Prior to the post-war era, coverage of the southern oceans and polar regions was very thin. Coverage has improved partly due to deployment of buoys, as well as use of satellites to support extrapolation. Ship-based readings changed over the 20th century from bucket-and-thermometer to engine-intake methods, leading to a warm bias as the new readings displaced the old. Until recently it was assumed that bucket methods disappeared after 1941, but this is now believed not to be the case, which may necessitate a major revision to the 20th century ocean record. Adjustments for equipment changes, trends in ship height, etc., have been large and are subject to continuing uncertainties. Relatively few studies have compared SST and MAT in places where both are available. There is evidence that SST trends overstate nearby MAT trends.

Processing methods to create global averages differ slightly among different groups, but they do not seem to make major differences, given the choice of input data. After 1980 the SST products have not trended upwards as much as land air temperature averages. The quality of data over land, namely the raw temperature data in GHCN, depends on the validity of adjustments for known problems due to urbanization and land-use change. The adequacy of these adjustments has been tested in three different ways, with two of the three finding evidence that they do not suffice to remove warming biases.

The overall conclusion of this report is that there are serious quality problems in the surface temperature data sets that call into question whether the global temperature history, especially over land, can be considered both continuous and precise. Users should be aware of these limitations, especially in policy sensitive applications.

See full report here.

Ross also notes the following on another paper:

You might be interested in a new paper I have coauthored with Steve McIntyre and Chad Herman, in press at Atmospheric Science Letters, which presents two methods developed in econometrics for testing trend equivalence between data sets and then applies them to a comparison of model projections and observations over the 1979-2009 interval in the tropical troposphere. One method is a panel regression with a heavily parameterized error covariance matrix, and the other uses a non-parametric covariance matrix from multivariate trend regressions. The former has the convenience that it is coded in standard software packages but is restrictive in handling higher-order autocorrelations, whereas the latter is robust to any form of autocorrelation but requires some special coding. I think both methods could find wide application in climatology questions.

The tropical troposphere issue is important because that is where climate models project a large, rapid response to greenhouse gas emissions. The 2006 CCSP report pointed to the lack of observed warming there as a “potentially serious inconsistency” between models and observations. The Douglass et al. and Santer et al. papers came to opposite conclusions about whether the discrepancy was statistically significant or not. We discuss methodological weaknesses in both papers. We also updated the data to 2009, whereas the earlier papers focused on data ending around 2000.

We find that the model trends are 2x larger than observations in the lower troposphere and 4x larger than in the mid-troposphere, and the trend differences at both layers are statistically significant (p<1%), suggestive of an inconsistency between models and observations. We also find the observed LT trend significant but not the MT trend.

If interested, you can access the pre-print, SI and data/code archive at my new weebly page.
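As a rough illustration of what testing trend equivalence involves, the sketch below fits a linear trend to the difference between two series and computes an autocorrelation-robust (Newey-West) standard error for that trend. It is a simplified stand-in, not either of the two methods described in the paper. The series are synthetic, and the function names, the lag choice and the noise parameters are all assumptions made for the example.

```python
import math
import random


def ols_trend(y):
    """Regress y on t = 0..n-1; return (slope, intercept, residuals)."""
    n = len(y)
    t_mean = (n - 1) / 2
    y_mean = sum(y) / n
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    sxy = sum((t - t_mean) * (v - y_mean) for t, v in enumerate(y))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    resid = [v - (intercept + slope * t) for t, v in enumerate(y)]
    return slope, intercept, resid


def hac_slope_se(resid, max_lag):
    """Newey-West (Bartlett kernel) standard error of the OLS slope."""
    n = len(resid)
    t_mean = (n - 1) / 2
    x = [t - t_mean for t in range(n)]
    sxx = sum(v * v for v in x)
    # Score terms x_t * e_t; their long-run variance drives the slope variance.
    g = [x[t] * resid[t] for t in range(n)]
    s = sum(v * v for v in g)
    for lag in range(1, max_lag + 1):
        weight = 1 - lag / (max_lag + 1)
        s += 2 * weight * sum(g[t] * g[t - lag] for t in range(lag, n))
    return math.sqrt(s) / sxx


def trend_difference_test(series_a, series_b, max_lag=4):
    """Return (trend of a minus b, robust standard error, t-statistic)."""
    diff = [a - b for a, b in zip(series_a, series_b)]
    slope, _, resid = ols_trend(diff)
    se = hac_slope_se(resid, max_lag)
    t_stat = slope / se if se > 0 else math.inf
    return slope, se, t_stat


def ar1_noise(n, rho, sigma):
    """Synthetic autocorrelated noise (illustrative only)."""
    e, out = 0.0, []
    for _ in range(n):
        e = rho * e + random.gauss(0.0, sigma)
        out.append(e)
    return out


random.seed(0)
years = 31  # 1979-2009 inclusive
# Hypothetical trends in degrees per year; not values from the paper.
model_like = [0.03 * t + v for t, v in enumerate(ar1_noise(years, 0.5, 0.1))]
obs_like = [0.01 * t + v for t, v in enumerate(ar1_noise(years, 0.5, 0.1))]

slope, se, t_stat = trend_difference_test(model_like, obs_like)
print(f"trend difference = {slope:.4f}/yr, robust SE = {se:.4f}, t = {t_stat:.2f}")
```

Working on the difference series keeps the example short. The panel and multivariate approaches described above handle several data sets at once, which this sketch does not attempt.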

See how these agree with many of the findings and conclusions in the compendium Surface Temperature Records: A Policy Driven Deception by Anthony Watts, E.M. Smith and me (and many others). See also further work on GHCN unedited here by E.M. Smith (Chiefio).

Investors Business Daily

Taxes: While the oil and gas companies are bearing the brunt of taxation, regulation and environmental angst, others are doing just fine, thank you. If you think cap-and-trade is dead, just follow the money.

According to a recently released Center for Responsive Politics review of reports filed with the U.S. Senate and U.S. House, General Electric and its subsidiaries spent more than $9.5 million on federal lobbying from April to June - the most it’s spent on lobbying since President Obama has been in office.

Why? As the fight over cap-and-trade grows, so does lobbying. Since January, GE and its units have spent more than $17.6 million on lobbying - a jump of 50% over the first six months of 2009.

GE is just one of many organizations and individuals that stand to make money if cap-and-trade makes it through Congress. GE makes wind turbines, not oil rigs, and has a vested interest in shutting down its fossil fuel competitors.

In an Aug. 19, 2009 e-mail obtained by Steve Milloy of JunkScience.com, General Electric Vice Chairman John Rice called on his GE co-workers to join the General Electric Political Action Committee “to collectively help support candidates who share the values and goals of GE.”

And what are those goals, and just what has GEPAC accomplished thus far? “On climate change,” Rice wrote, “we were able to work closely with key authors of the Waxman-Markey climate and energy bill, recently passed by the House of Representatives. If this bill is enacted into law, it will benefit many GE businesses.”

GE is a member of the U.S. Climate Action Partnership, which advocates cap-and-trade legislation and leads the drive for reductions of so-called greenhouse gases. One of its subsidiaries was involved in Hopenhagen, a campaign by a group of businesses to build support for the recent Copenhagen Climate Conference, which was supposed to come up with a successor to the failed Kyoto Accords.

To be fair, coal and gas companies lobby too, both out of self-preservation and self-interest.

But they produce a useful product that creates jobs and boosts GDP. Alternative energy, even after huge subsidies, adds little to our energy mix. Evidence suggests alternative energy is a net job loser, siphoning resources from productive areas of the economy.

Renewable energy sources like wind, solar energy and biomass total only 3% of our energy mix. Spain’s experience is that for each “green” job created, 2.2 jobs are lost due to the siphoning off of resources that private industry needed to grow.

There’s money to be made in climate change even if the climate doesn’t change, and the profit motive may now be the main driver of cap-and-trade.

The Chicago Climate Exchange (CCX) was formed to buy and sell carbon credits, the currency of cap-and-trade. Founder Richard Sandor estimates the climate trading market could be “a $10 trillion dollar market.”

It could very well be if cap-and-trade legislation like Waxman-Markey and Kerry-Boxer is signed into law, making energy prices necessarily skyrocket, and as companies buy and trade permits to emit those six “greenhouse” gases.

As we have written, profiteering off climate change hysteria is a growth industry as well as a means to the end of fundamentally transforming America, which the President has said is his goal.

Czech President Vaclav Klaus has called climate change a religion whose zealots seek the establishment of government control over the means of production. It reminds him, he said, of the totalitarianism he once endured.

After the Climate-gate scandal broke, Lord Christopher Monckton, a former science adviser to British Prime Minister Margaret Thatcher, said of the scientists at Britain’s Climate Research Unit at the University of East Anglia and those they worked with: “They’re criminals.” He also called them “huckstering snake-oil salesmen and ‘global warming’ profiteers.”

Like the scientists who lived off the grant money they received from scaring us to death with manipulated data, others hope to profit off perhaps the greatest scam of all time. See this important post here.

Global Temperature And Data Distortions Continue

By Dr. Tim Ball

Recent reports claim June was the warmest on record, but it seems to fly in the face of reports of record cold from around the world.

Reports from Australia say, “Sydney recorded its coldest June morning today since 1949, with temperatures diving to 4.3 degrees just before 6:00 a.m. (AEST).” “Experts say it is unusual to see such widespread cold weather in June.”

In the southern hemisphere reports of cold have appeared frequently but rarely make the mainstream media. “The Peruvian government has declared a state of emergency in more than half the country due to cold weather.” “This week Peru’s capital, Lima, recorded its lowest temperatures in 46 years at 8C, and the emergency measures apply to several of its outlying districts.”

“In Peru’s hot and humid Amazon region, temperatures dropped as low as 9C. The jungle region has recorded five cold spells this year. Hundreds of people - nearly half of them very young children - have died of cold-related diseases, such as pneumonia, in Peru’s mountainous south where temperatures can plummet at night to -20C.” “A brutal and historical cold snap has so far caused 80 deaths in South America, according to international news agencies. Temperatures have been much below normal for over a week in vast areas of the continent.”

“It snowed in nearly all the provinces of Argentina, an extremely rare event. It snowed even in the western part of the province of Buenos Aires and Southern Santa Fe, in cities at sea level.” (Source)

Evidence of the cold is reflected in the fact that Antarctic ice is continuing to reach record levels. “Antarctic sea ice peaks at third highest in the satellite record”.

The same contradictory evidence is appearing in the Arctic. They claimed the most dramatic warming was occurring in the Arctic, but this contradicts what the ice is doing. Ice continues its normal summer melt, with the rate slowing in June and July (Figure 1). The red line is for 2010.

So where are the stories coming from? It goes back to the manipulation of temperature data by the two main generators, the Goddard Institute for Space Studies (GISS) and the Hadley Climate Research Unit (HadCrut). They use data provided by individual countries of the World Meteorological Organization. This is supposedly raw data, but in fact it has already been adjusted for various presumed local anomalies.

But the Arctic warming is even more problematic. The Arctic Climate Impact Assessment (ACIA) is the source of data for the Intergovernmental Panel on Climate Change, yet it tells us there is no data for the entire Arctic Ocean Basin. Figure 2 shows the diagram from their report.

Figure 2: Weather stations for the Arctic.

Source: Arctic Climate Impact Assessment Report

This is why the map showing temperature for the Arctic shows “No data” for the region (Figure 3).

Figure 3: Temperatures for the period 1954 to 2003. They give the source as the CRU. Source: Arctic Climate Impact Assessment Report

So how do they determine that the Arctic is warming at all, let alone more rapidly than other regions? The answer is, with GISS at least, they use computer models to extrapolate. They do this by assuming that a weather station record is valid for a 1200 km region. Figure 4 shows the 1200 km smoothing results for the Arctic region (the green circle is 80N latitude), with southern weather stations interpolated into the Arctic. Source: wattsupwiththat.com

Then we see what happens when the interpolation or smoothing is done using a more reasonable 250 km (Figure 5). Temperature pattern using a 250 km range for single station data. Source: wattsupwiththat.com
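To make the effect of the radius concrete, here is a minimal sketch of radius-limited interpolation. The station coordinates and anomaly values are hypothetical, and the linearly tapering weight is an assumption for illustration, since the text does not spell out the weighting used. It shows only how the choice of 1200 km versus 250 km decides whether a point with no nearby station gets a value at all.

```python
import math

EARTH_RADIUS_KM = 6371.0


def great_circle_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    # Spherical law of cosines, clamped to guard against rounding error.
    c = math.sin(p1) * math.sin(p2) + math.cos(p1) * math.cos(p2) * math.cos(dlon)
    return EARTH_RADIUS_KM * math.acos(max(-1.0, min(1.0, c)))


def interpolate(lat, lon, stations, radius_km):
    """Weighted mean of station anomalies within radius_km.

    Weights fall linearly from 1 at the station to 0 at radius_km
    (an assumed weighting). Returns None when no station is in range.
    """
    num = den = 0.0
    for s_lat, s_lon, anomaly in stations:
        d = great_circle_km(lat, lon, s_lat, s_lon)
        if d < radius_km:
            w = 1.0 - d / radius_km
            num += w * anomaly
            den += w
    return num / den if den > 0 else None


# Hypothetical stations: (latitude, longitude, temperature anomaly in deg C)
stations = [
    (70.0, 20.0, 1.5),
    (68.0, -135.0, 0.5),
]

# A point in the Arctic Ocean roughly 900 km from the nearest station above.
target = (78.0, 15.0)

for radius in (1200.0, 250.0):
    value = interpolate(target[0], target[1], stations, radius)
    print(f"radius {radius:.0f} km -> {value}")
```

With the larger radius the ocean point simply inherits the anomaly of the one land station in range. With the smaller radius it has no value, which is the "No data" case.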

None of this is surprising because GISS has consistently distorted the record, always to amplify warming. The problem of data adjustment is best illustrated by comparing the results of GISS and HadCrut (Figure 6).

Figure 6: Comparison of Global temperature record. Source: Steve Goddard, WUWT.

The HadCrut data shows what Phil Jones, former Director of the Climatic Research Unit (CRU), confirmed to the BBC: that global temperatures have not increased since 1998. However, the GISS data shows a slight warming over the period and a significant increase from 2007. How can two records both using the same weather data achieve such different conclusions? The simple answer is they use different stations and adjust them differently, especially for such things as the Urban Heat Island Effect (UHIE).

There is another problem.

The number of stations used to produce a global average was significantly reduced in 1990 and this affected temperature estimates as Ross McKitrick showed (Figure 7). He wrote, “The temperature average in the above graph is unprocessed. Graphs of the ‘Global Temperature’ from places like GISS and CRU reflect attempts to correct for, among other things, the loss of stations within grid cells, so they don’t show the same jump at 1990.” McKitrick got the idea for the problem from an article by meteorologist Joe D’Aleo (2002).
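A toy calculation shows why an unprocessed average can jump when stations drop out, even if no individual station changes. The station names and temperatures below are invented for the example, and the anomaly method shown is only one simple way a processed product might avoid the jump. It is not a description of what GISS or CRU actually do.

```python
# Hypothetical stations with constant temperatures (deg C): nothing warms.
stations = {
    "tropical_a": 25.0,
    "midlat_b": 12.0,
    "midlat_c": 10.0,
    "highlat_d": -5.0,
    "highlat_e": -8.0,
}

# Assumed for illustration: the two coldest stations stop reporting in 1990.
dropped_from_1990 = {"highlat_d", "highlat_e"}


def active_stations(year):
    """Names of stations still reporting in the given year."""
    return [
        name for name in stations
        if year < 1990 or name not in dropped_from_1990
    ]


def yearly_raw_average(year):
    """Simple mean of whatever stations report that year (unprocessed)."""
    names = active_stations(year)
    return sum(stations[n] for n in names) / len(names)


def yearly_anomaly_average(year):
    """Mean of each station's departure from its own baseline."""
    names = active_stations(year)
    # Each station's baseline is its own constant temperature,
    # so every anomaly is zero by construction.
    anomalies = [stations[n] - stations[n] for n in names]
    return sum(anomalies) / len(anomalies)


for year in (1989, 1991):
    print(
        year,
        f"raw mean = {yearly_raw_average(year):.2f}",
        f"anomaly mean = {yearly_anomaly_average(year):.2f}",
    )
```

The raw mean rises by almost nine degrees purely because the coldest stations left the sample, while the anomaly mean stays flat. Real station records are not constant, so any correction has to separate genuine change from this kind of sampling effect.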

The challenge is to produce meaningful long-term records from such interrupted data, but that is not the only problem because the loss of stations is not uniform. “The loss in stations was not uniform around the world. Most stations were lost in the former Soviet Union, China, Africa and South America.” This may explain the distortions currently occurring because it adds to the existing bias toward eastern North American and western European stations. In the early spring and summer, the Northern Hemisphere saw heat in eastern North America and Western Europe. There is a greater density of weather stations in these regions and they have the greatest heat island effect. The rest of the Northern Hemisphere and the Southern Hemisphere had cooler conditions, but in the deliberately distorted record this was minimized.

McKitrick, Essex and Andresen, in “Does a global temperature exist?” concluded, “The purpose of this paper was to explain the fundamental meaninglessness of so-called global temperature data.” “But nature is not obliged to respect our statistical conventions and conceptual shortcuts.”

That is clearly the case this year and it confirms Alfred Whitehead’s observation that, “There is no more common error than to assume that, because prolonged and accurate calculations have been made, the application of the result to some fact of nature is absolutely certain”.

Read and see more here.

By Dennis Ambler

As we are told of yet another “hottest year on record”, our daily news reports are full of “hot testimony”, for example the heat wave in Moscow, Russia is described in this report:

“The heat has caused asphalt to melt, boosted sales of air conditioners, ventilators, ice cream and beverages, and pushed grain prices up. Environmentalists are blaming the abnormally dry spell on climate change.

On ‘black’ Saturday, temperatures in Moscow hit a record high of 38 degrees Celsius with little relief at night, making this July the hottest month in 130 years. The average temperature in central Russia is 9 degrees above the seasonal norm.”

As usual, WWF regard this as proof of global warming,

“Certainly, such a long period of hot weather is unusual for central Russia. But the global tendency proves that in future, such climate abnormalities will become only more frequent”, says Alexey Kokorin, the Head of Climate and Energy Program of the World Wide Fund (WWF) Russia.

He fails, of course, to say what caused the previous heat wave of similar magnitude 130 years previously, as was mentioned in the report. Such is the nature of environmental reporting these days, that such questions equally do not arise in the minds of those willing reporters who swallow every crumb of global warming thrown to them. Never ever mentioned are historical instances such as the seven month long European heat wave of 1540, when the River Rhine dried up and the bed of the River Seine in Paris was used as a thoroughfare.

It seems to be axiomatic that, whilst reports such as the Moscow heat wave make the headlines, there is scant reporting in the popular media of the severe cold in the South American winter, with loss of life and livelihoods, due to temperatures in some places reaching minus 15 Celsius, or 5 deg F.

“The polar wave that has trapped the Southern Cone of South America has caused an estimated one hundred deaths and killed thousands of cattle, according to the latest reports on Monday from Argentina, south of Brazil, Uruguay, Paraguay, Chile and Bolivia.

Even the east of the country which is mostly sub-tropical climate has been exposed to frosts and almost zero freezing temperatures.”

But not to worry, it is still global warming, as explained by the Moscow head of WWF:

“I think that the heat we are suffering from now as well as very low temperatures we had this winter, are hydro-meteorological tendencies that are equally harmful for us as they both were caused by human impact on weather and the greenhouse effect which has grown steadily for the past 30-40 years.”

The “cold is hot” approach can be traced back to a working document by the UK Tyndall Centre for Climate Change back in 2004, when they said:

* Only the experience of positive temperature anomalies will be registered as indication of change, if the issue is framed as global warming.